Defining my product analytics flow

The what and why behind how I decided to track metrics for a new product

February 01, 2024 • 7 min read • gitpig.dev

tl;dr

- Decided on LogSnag as my go-to for basic product analytics and Simple Analytics for basic page analytics.

- Dub is a great option for URL shortening with custom domains.

- Added the LogSnag/Simple Analytics combo to my product template defaults.

- Added the LogSnag/Simple Analytics combo to my GitPig documentation, marketing pages and client app for certain actions.

- Began work on my contract testing product for microservice APIs.

- Thinking about Setapp as an option for releasing future desktop applications.

KISS analytics for products

After launching GitPig, I quickly found myself in a predicament: I had no way to know how the product was being used.

Initially, I figured that users at my workplace (where I decided to launch first) would reach out to me about any problems they were having with the workflow within the app. Turns out that was too presumptuous.

I figured that I would add some basic tracking after the fact (I did build it in two days), but it turns out that a little tracking would have gone a long way towards understanding basic usage, e.g. how many downloads and how many active users. It also turns out that this is much more painful to rectify for desktop applications, since users must update to the latest version before any tracking starts applying. So yeah, this was a problem.

It's still a problem for web, but much less so. Push the changes and then you'll start collecting metrics straight away.

That being said, I decided to figure out a simple analytics stack to work with going forward that I would add to my product repo template (for future products) and then backtrack to publish a new release for GitPig.

Figuring out the product analytics stack

In my previous post, "I'm launching 12 products in 12 months", I sang the praises of how simple and straightforward LogSnag looked. It certainly felt simpler than Amplitude for creating basic dashboards and capturing simple metrics, so it was a no-brainer to include.

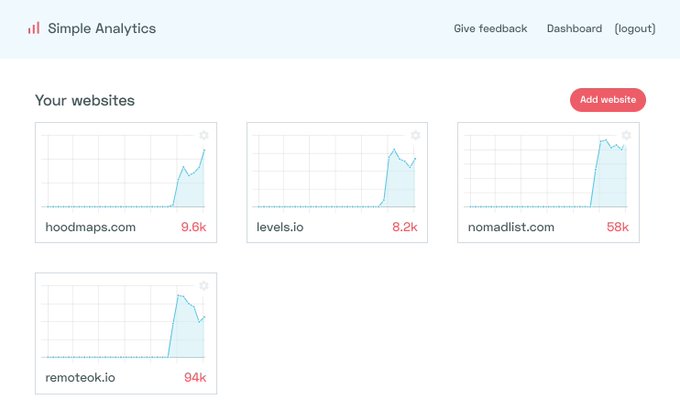

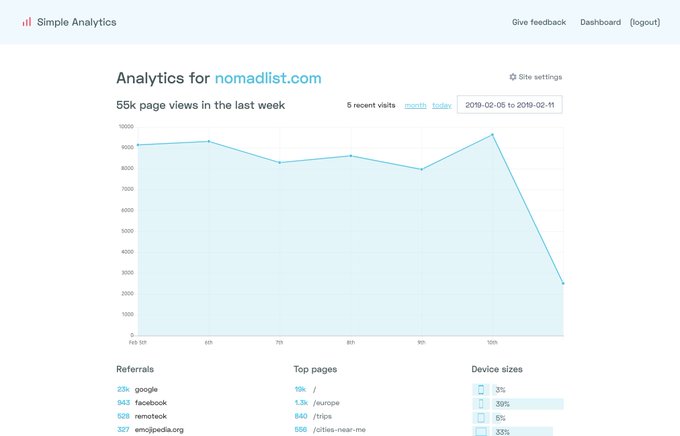

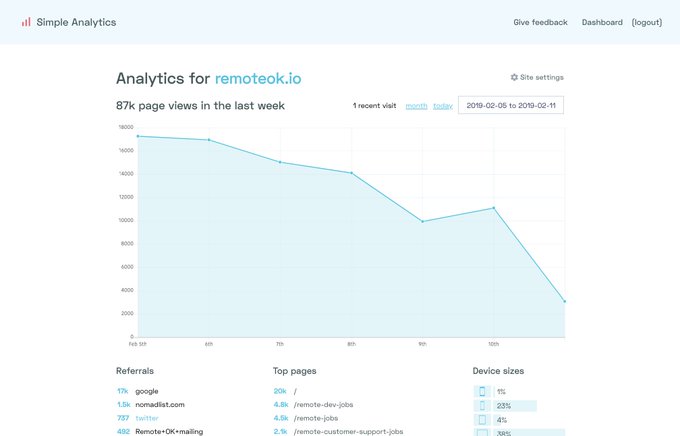

Afterwards, I had to figure out how to capture simple page metrics. Of course, there are the obvious heavy hitters like Google Analytics, but given all the negative press (and of course the scary amount of data they already have), I opted to go with Simple Analytics.

It's privacy-first, super simple to configure and easy to track simple page metrics like campaigns and referrals. Definitely enough for my use case.

Surprise, surprise. I also first heard about this from Pieter Levels.

In 💖 love with simpleanalytics.io, 📊 privacy-friendly analytics. You can use your own domain like sa.nomadlist .com/app.js to host the tracking JS. The dashboard is super simple and it's much easier to find what I need than in the monstrosity that is Google Analytics.

Simple Analytics are also open about their business journey, as seen in their article "How we hit our $30k ARR milestone". I don't want to pretend we're kindred spirits, but it feels nice to see others sharing their journey.

Finally, I decided to use Dub.co for my link shortener. They're new-ish to the scene, but I followed Steven Tey's journey with the company on Twitter and have a soft spot for it as a supporter. It pays to share your learnings in public.

He's also working on it full-time now after leaving Vercel.

Life update: I'm starting a company around Dub.co 🚀 We're building the link management infrastructure for you to create marketing campaigns, link sharing features, and referral programs. Here's how it all started – and where we're going:

So, that's it. That's the I-am-still-learning-this-thing-but-for-now-that-is-my-beginner product analytics stack.

Implementing the stack

My product template repository is a Turborepo monorepo. I won't go into the nitty-gritty of all the different technologies used, but the long story short is that I abstract a lot of the shared technologies into their own private packages. That's basically what I did for everything mentioned above.

As far as the template goes, the Simple Analytics script tag is already in place and ready for when I configure the website, while LogSnag tracking is something I need to add manually once I fork the repo and begin building a new product.
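To make the "private package" idea a bit more concrete, here is a minimal sketch of what a shared analytics package wrapping LogSnag could look like. It is not the actual template code: the file location, the `createTracker`, `buildLogPayload` and `httpSender` names and the channel names are all hypothetical, and the endpoint URL and payload fields reflect my understanding of LogSnag's HTTP log API, so check them against the official docs before relying on them.

```typescript
// Hypothetical shared analytics package (e.g. packages/analytics/src/index.ts).
// All names and channels are illustrative assumptions, not the real template code.

export type Channel = "marketing" | "docs" | "client";

export interface TrackInput {
  channel: Channel;
  event: string;
  description?: string;
  tags?: Record<string, string | number | boolean>;
}

export interface LogPayload extends TrackInput {
  project: string;
}

// The transport is injected so the wrapper can be exercised without network access.
export type Sender = (payload: LogPayload) => Promise<void>;

// Pure helper: attach the project name to an event.
export function buildLogPayload(project: string, input: TrackInput): LogPayload {
  return { project, ...input };
}

// Assumed endpoint and auth scheme for LogSnag's HTTP API; verify before use.
export function httpSender(token: string): Sender {
  return async (payload) => {
    const res = await fetch("https://api.logsnag.com/v1/log", {
      method: "POST",
      headers: {
        "Content-Type": "application/json",
        Authorization: `Bearer ${token}`,
      },
      body: JSON.stringify(payload),
    });
    if (!res.ok) throw new Error(`LogSnag request failed: ${res.status}`);
  };
}

export function createTracker(project: string, send: Sender) {
  return {
    // Analytics should never break the product, so rejected sends are swallowed.
    track: (input: TrackInput): Promise<void> =>
      send(buildLogPayload(project, input)).catch(() => undefined),
  };
}
```

Each app in the monorepo would then create one tracker (for example `createTracker("gitpig", httpSender(token))`) and call `track` wherever an action is worth counting. Injecting the sender keeps the package testable and makes it easy to swap in a no-op during local development.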

Implementing product analytics for GitPig

As mentioned before, I retroactively realised my mistake with GitPig after the soft launch.

I haven't gone through the process of attempting a proper launch, so hopefully I haven't missed too much traffic from my guerilla promotion tactics. I am aiming to do that launch sometime in the near future (and will report how I go about it).

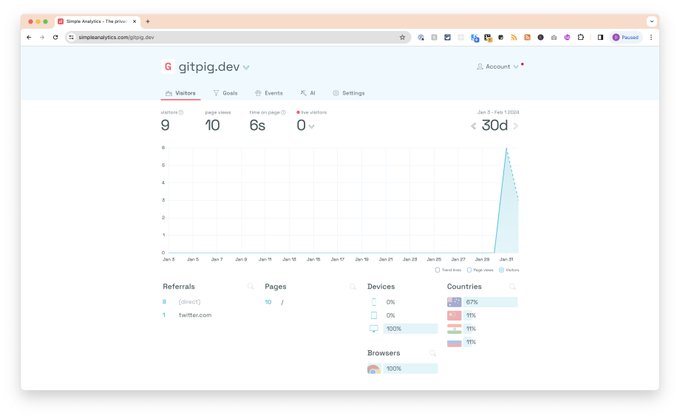

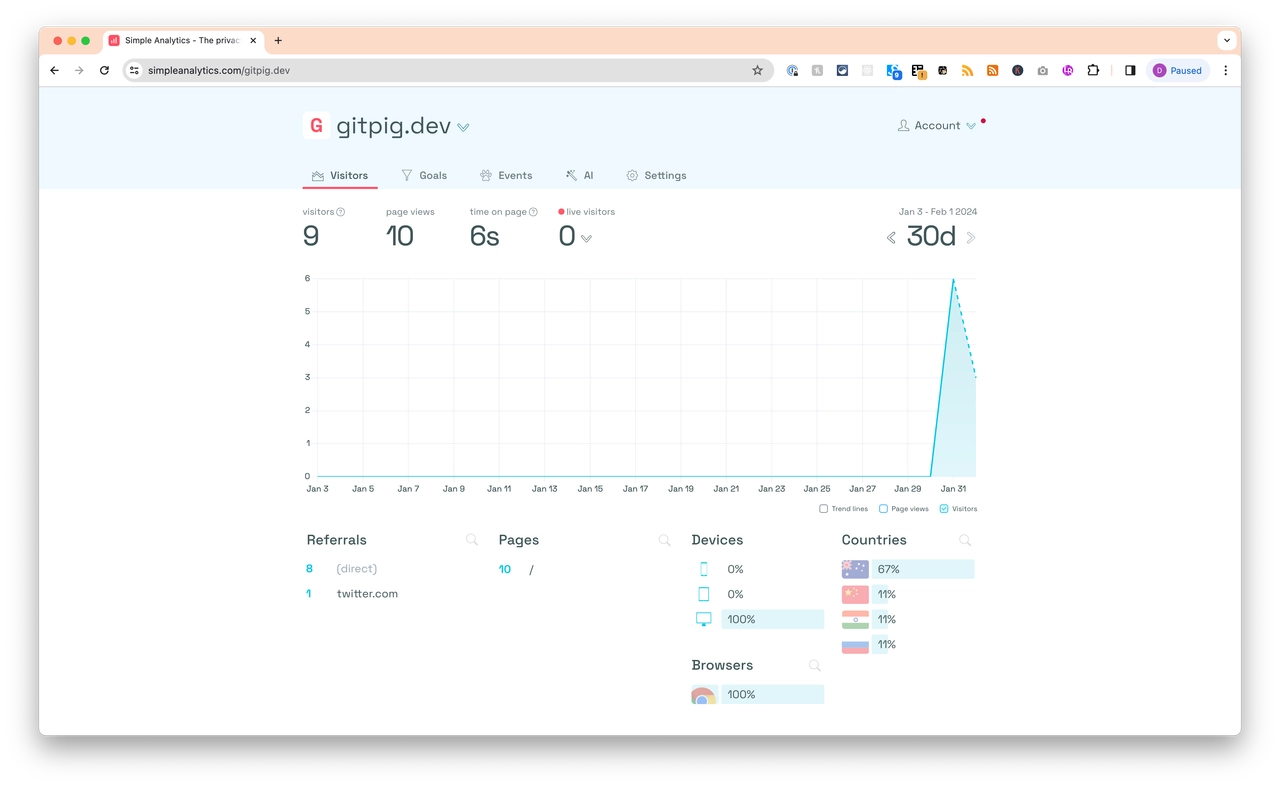

First, I configured Simple Analytics for the main marketing website (although I did not do it for the documentation website... hopefully that's okay).

After 24 hours, I've had 9 visitors (woohoo!).

It would have been more interesting to capture the early traffic I tried driving towards the website at work, but I'll do better going forward.

Better late than never, but I've finally added some basic analytics to my marketing page for gitpig.dev 😅 #buildinpublic #workingoutloud

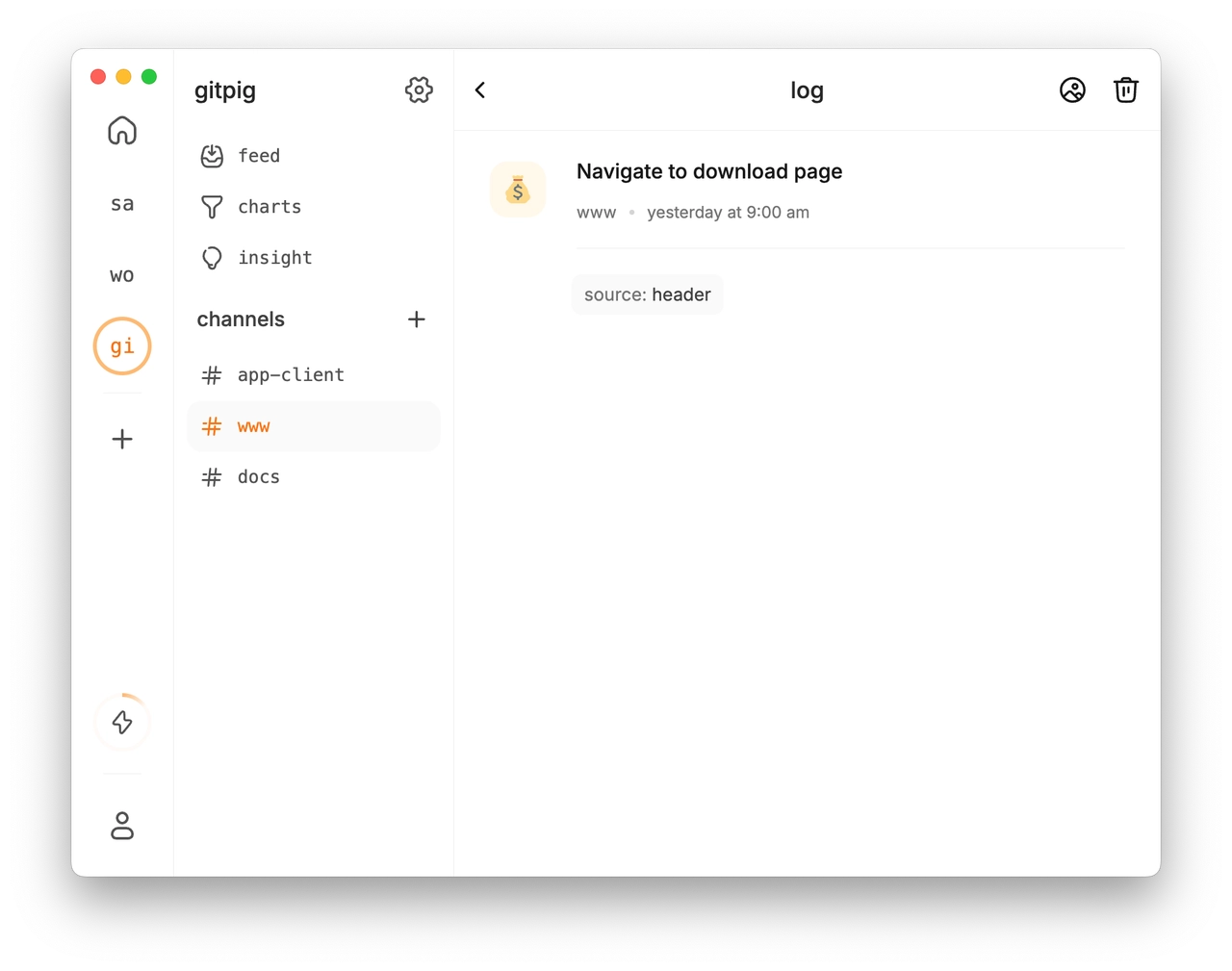

Next, I updated the GitPig marketing and documentation websites to track users being redirected to the GitPig releases page to get a better idea about how many might be downloading the binary. Each button that does the redirection also has a tag, so I can get a better idea if they went there from the header, footer or hero call-to-action links.

Of course, it would be better if I (1) moved the binary downloads to the marketing page to track actual downloads and (2) put more stats on active users on the actual application.
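The tagged redirect tracking described above can be sketched roughly like this. It is an illustration under assumptions rather than the real GitPig code: the event name, the `source` tag key, the function names and the releases URL passed in are all hypothetical, and the `track` function stands in for whichever analytics client is actually wired up.

```typescript
// Hypothetical sketch: event names, tag keys and helper names are placeholders.
type Site = "marketing" | "docs";
type LinkSource = "header" | "footer" | "hero";

interface RedirectEvent {
  channel: Site;
  event: string;
  tags: { source: LinkSource };
}

// Pure helper: describe a click on a download link and where on the page it came from.
function releasesRedirectEvent(site: Site, source: LinkSource): RedirectEvent {
  return { channel: site, event: "Releases redirect", tags: { source } };
}

// Build a click handler that records the event, then sends the user on their way.
function onDownloadClick(
  track: (e: RedirectEvent) => void,
  navigate: (url: string) => void,
  site: Site,
  source: LinkSource,
  releasesUrl: string,
): () => void {
  return () => {
    try {
      track(releasesRedirectEvent(site, source));
    } catch {
      // Ignore tracking failures; the redirect matters more than the metric.
    }
    navigate(releasesUrl);
  };
}
```

In a browser, `navigate` would typically be something like `(url) => window.location.assign(url)`, with one handler created per button so the header, footer and hero links each report their own `source`.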

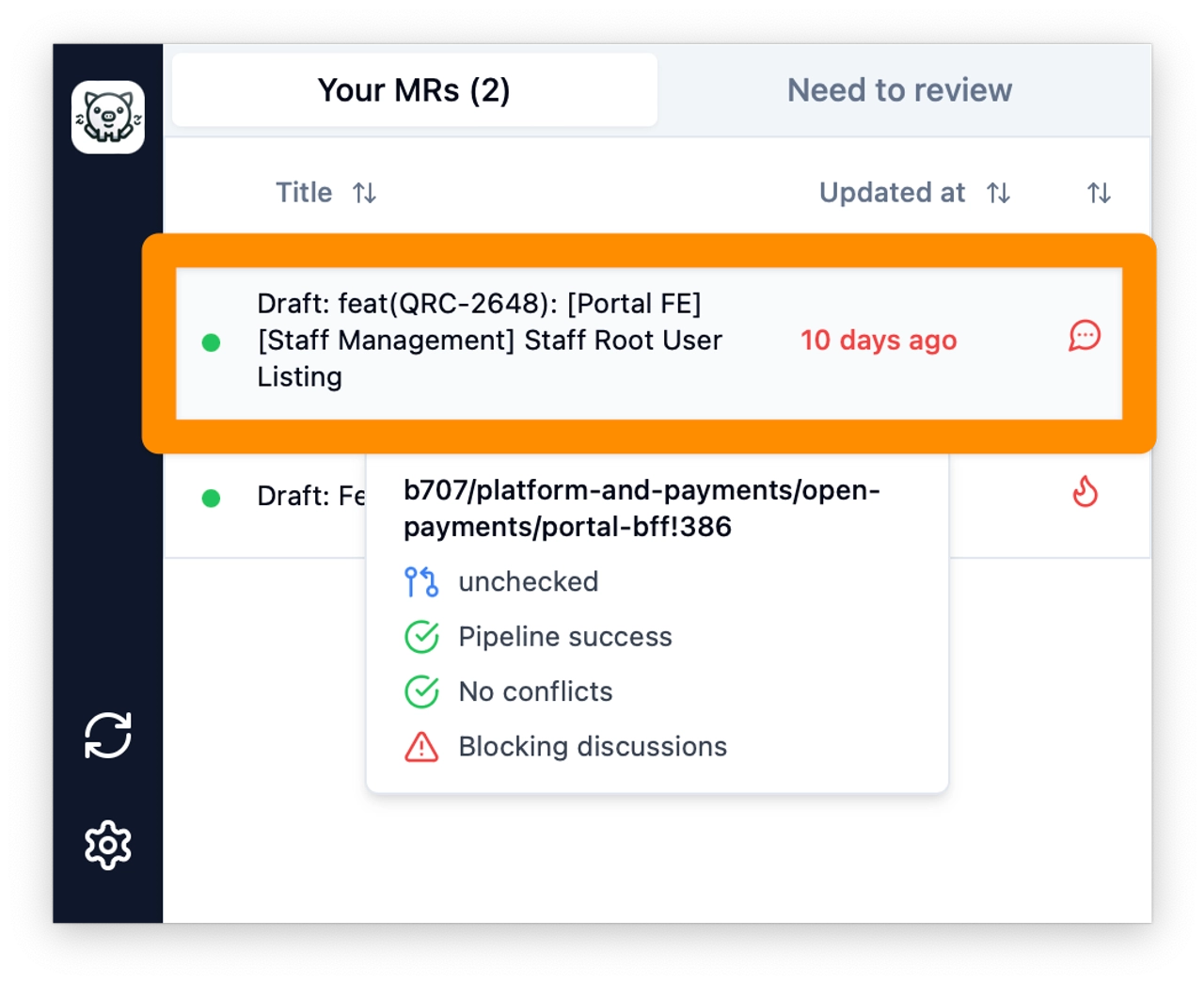

Finally, I configured tracking on the cross-platform desktop app. All I'm doing here is tracking whether or not users are clicking on the "merge requests" in the table to open up the URL on GitLab.

Those metrics are also being tracked on their own channel for the client in LogSnag.

Beginning work on the next application

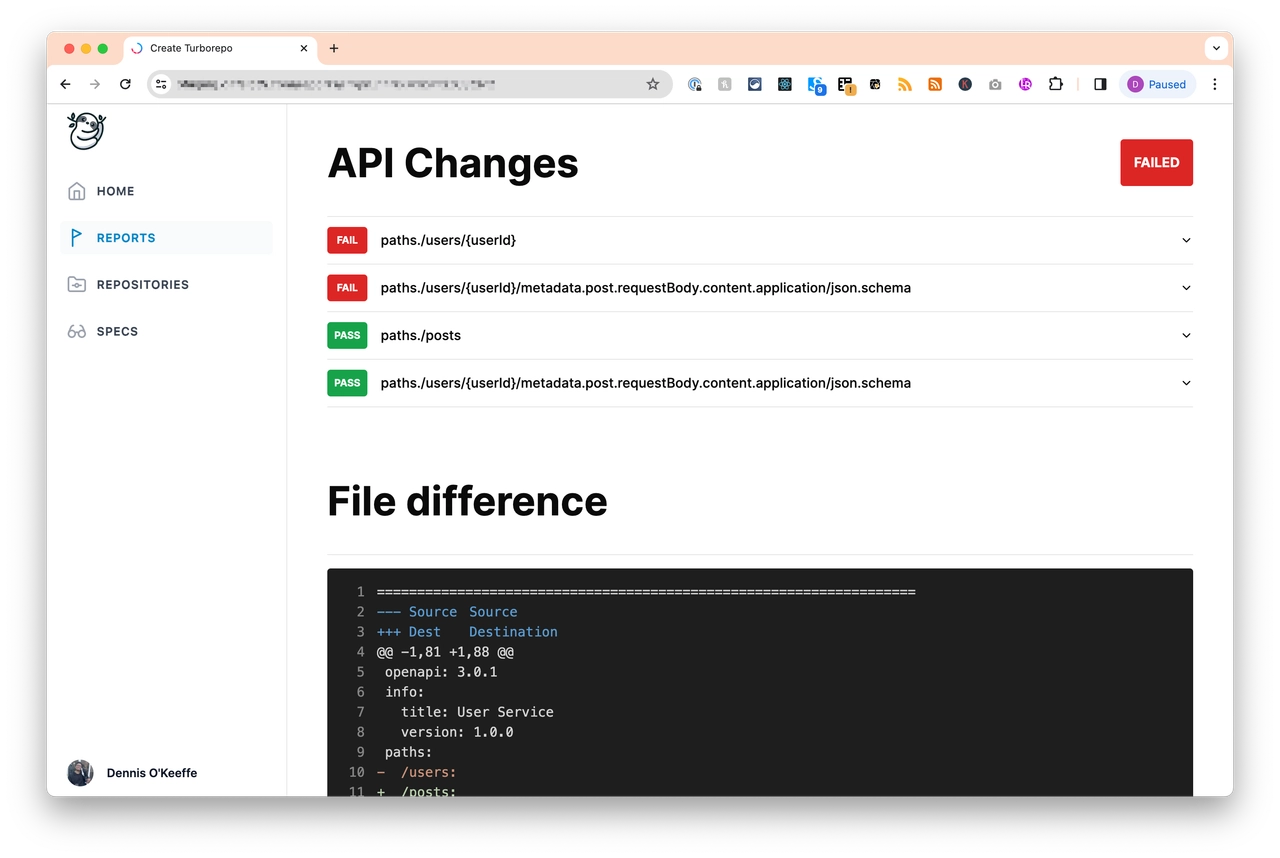

I've begun work on another solution for a problem I keep banging my head against at work: contract testing between APIs (application programming interfaces).

For those who are not developers, it might sound like a foreign word, but the easiest way to explain it is with an analogy.

Imagine you're planning to build a house and you've hired various teams to work on different parts: the electricians, plumbers, carpenters, and so on. Each team needs to know exactly what to do to ensure their part fits perfectly with the others. This is where the blueprint comes in. The blueprint is a detailed plan that everyone agrees on before starting work. It ensures that the electrical outlets will be in the right places for the carpenters' cabinets, the plumbing will fit the bathroom fixtures, and everything else comes together seamlessly.

Contract testing for APIs works similarly. In software development, different teams might work on different services that need to communicate with each other through APIs. An API is like a contract that says, "If you give me this specific information in this specific way, I'll return this specific response."

Contract testing ensures that these APIs meet the agreed-upon "blueprint" or contract. Just like how the blueprint ensures the electricians don't put wires where a window is supposed to go, contract testing makes sure that one team's code sends the correct type of data in the correct format to another team's code, which then responds appropriately. This process helps catch any mismatches or errors early on, much like noticing a mistake in the blueprint before it causes a bigger problem during construction.
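For the developers reading, the same idea in code: a minimal sketch of a consumer-side contract check. This is a toy illustration, not a real contract testing tool and not the product being built here; the `Contract` type, the `checkContract` function and the example "get user" endpoint are all made up for the example.

```typescript
// Toy illustration of a consumer-side contract check; all names are hypothetical.
type FieldType = "string" | "number" | "boolean";

// The "blueprint": the fields a consumer relies on and the types it expects.
type Contract = Record<string, FieldType>;

// Returns human-readable mismatches; an empty list means the response honours the contract.
// Extra fields in the response are ignored, since the consumer does not depend on them.
function checkContract(contract: Contract, response: Record<string, unknown>): string[] {
  const problems: string[] = [];
  for (const [field, expected] of Object.entries(contract)) {
    if (!(field in response)) {
      problems.push(`missing field "${field}"`);
      continue;
    }
    const actual = typeof response[field];
    if (actual !== expected) {
      problems.push(`field "${field}" should be ${expected} but was ${actual}`);
    }
  }
  return problems;
}

// Hypothetical contract for a "get user" endpoint.
const userContract: Contract = { id: "number", email: "string", active: "boolean" };

// The provider renamed "email" to "mail" and started sending "id" as a string.
const providerResponse = { id: "42", mail: "a@example.com", active: true };

console.log(checkContract(userContract, providerResponse));
```

Running this reports two problems: `id` has the wrong type and `email` is missing. Catching that in a test run is the software equivalent of spotting the wire-through-the-window mistake on the blueprint rather than on the building site.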

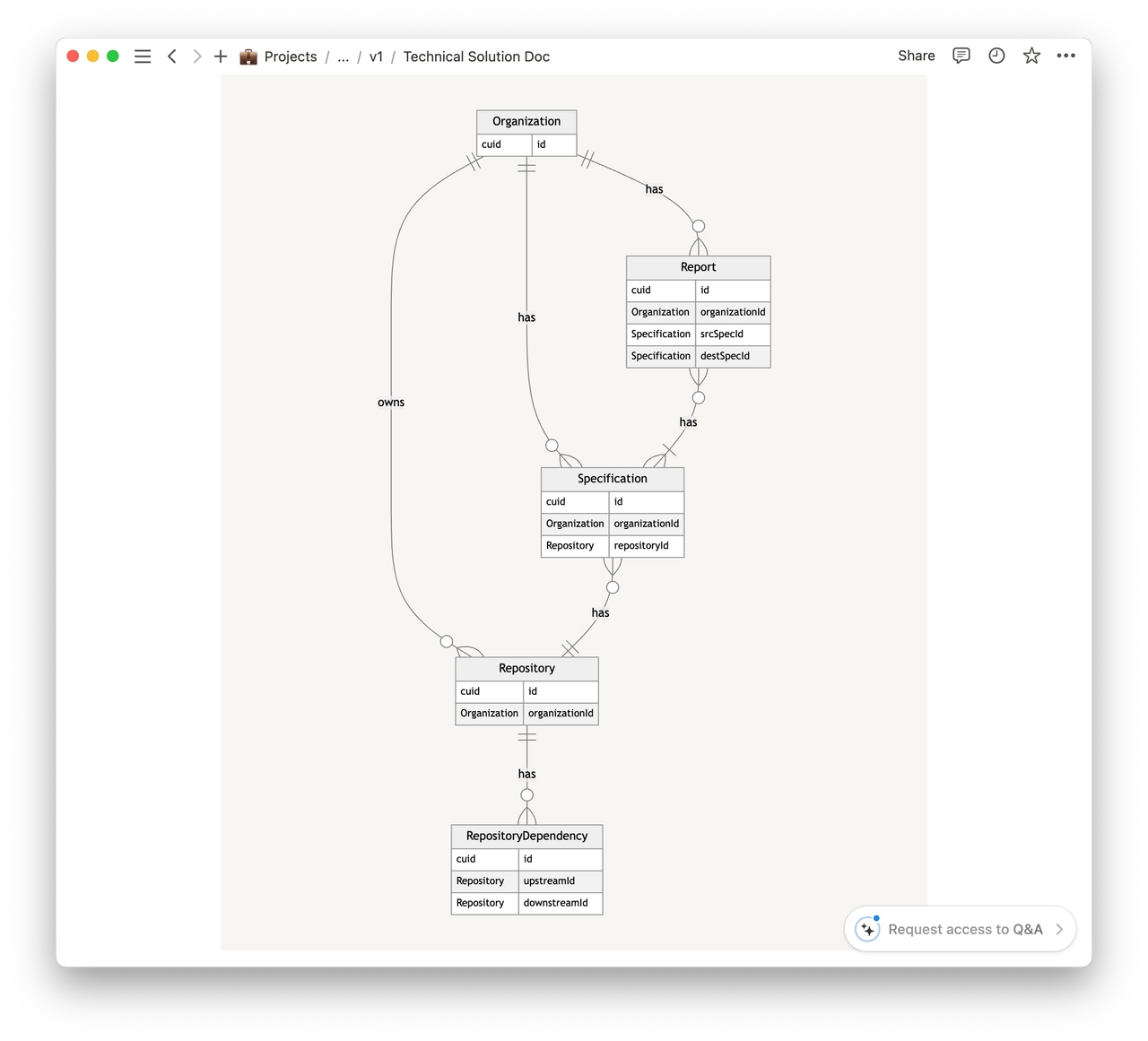

I started drawing up a simple technical solution in Notion:

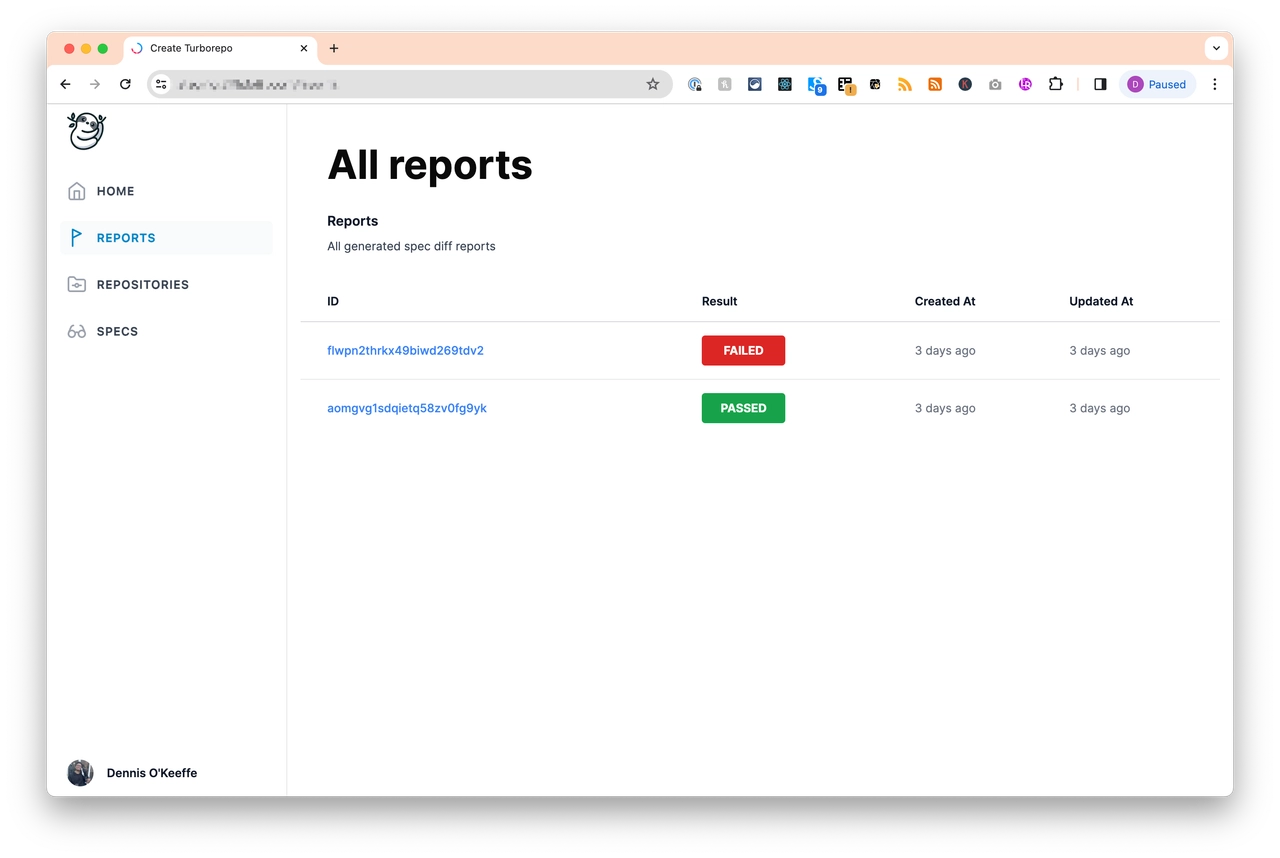

Afterwards, I forked my product template and smashed out a work-in-progress.

So far, it seems promising! I need to do a bit more cleanup for the MVP, but the proof-of-concept is holding up okay.

Roadwrap

Coming up on my agenda:

- Properly launching GitPig. Will be writing on how that goes!

- Exploring options with Setapp as a developer. I've been a subscriber for so long, and it is super awesome.

- Continuing work on the MVP for the OpenAPI diffing tool.

Stay foolish, pals.

-- Dennis